Anthropic Releases Claude Opus 4.7 With Weakened Cyber Capabilities

Anthropic released an upgraded version of its flagship model, Claude Opus 4.7, on April 16 (local time). Compared to the previous Opus 4.6 model, Opus 4.7 demonstrates “significant improvements” in advanced software engineering capabilities, particularly on difficult tasks, with enhanced rigor and consistency in complex, long-running operations and improved vision abilities. However, Anthropic deliberately weakened the model’s cybersecurity attack-defense capabilities during training and introduced safety mechanisms to automatically detect and block prohibited or high-risk requests.

Performance and Benchmarks

In benchmark testing, Opus 4.7 achieved scores generally higher than the previous Opus 4.6 and competitor GPT-5.4. However, Anthropic emphasized that Opus 4.7’s overall capabilities do not match the company’s most powerful model, Claude Mythos Preview. According to Anthropic: “By deploying and operating these protective mechanisms in the real world, we will accumulate experience to ultimately enable broader release of Mythos-level models.”

Deployment and Pricing

Opus 4.7 is now live across all Claude products and API interfaces, integrated with Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry services. Pricing remains consistent with Opus 4.6: $5 per million input tokens and $25 per million output tokens.

Token Consumption Changes

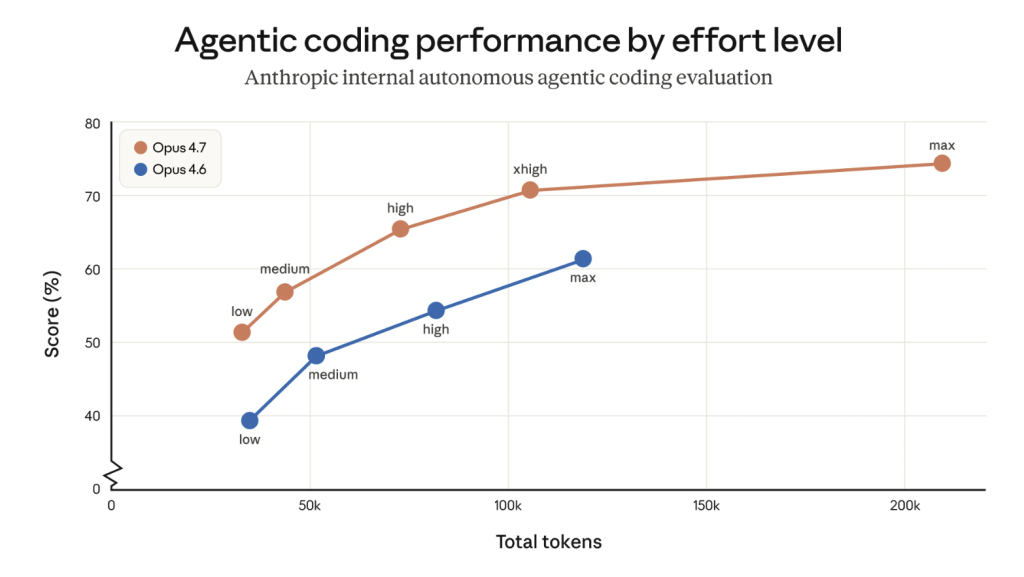

Two changes in Opus 4.7 compared to Opus 4.6 will affect token usage. First, Opus 4.7 uses an updated tokenizer, improving how the model processes text. However, this means identical inputs may consume more tokens—approximately 1 to 1.35 times the previous generation’s consumption.

Second, Opus 4.7 performs more reasoning at higher “thinking intensity,” particularly in subsequent rounds of agentic scenarios. This improves reliability on complex problems but generates additional output tokens.

Market Analysis and Context

Analysts characterize Opus 4.7 as a “transitional” model. Investment analyst Adam Button noted that the Opus 4.7 release reinforces Anthropic’s narrative around “godlike models” like Mythos and confirms market skepticism: publicly available paid models are essentially “lite” versions constrained by safety mechanisms.

Company Background and Financial Milestone

Anthropic, founded in 2021 by former OpenAI employees, develops the Claude series of large language models. On April 6, Anthropic announced its annualized revenue (ARR) exceeded $300 billion, a significant increase from $9 billion at the end of 2025. The company is actively pursuing an initial public offering.

Cybersecurity Risk Concerns

Anthropic executives have repeatedly warned about AI’s impact on cybersecurity. According to reports dated April 10 (local time), U.S. Treasury Secretary Yellen and Federal Reserve Chair Powell held an emergency meeting with Wall Street leaders on April 7 to discuss how Anthropic’s latest Mythos AI model could heighten cybersecurity risks. Anthropic has stated that Mythos is not suitable for public release because the model could be misused by cybercriminals and spies. The company is selectively providing access to Mythos to leading global cybersecurity and software enterprises.